BY STAS MARGARONIS, RBTUS

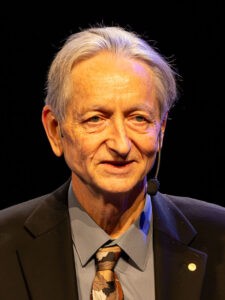

Geoffrey Hinton, a 2024 Nobel Prize winner, is now warning that AI is on course to develop a super-intelligence capability that poses a threat to humans. He warns of mass unemployment, increased cyber-attacks and biological warfare, mounted by an AI technology run for profit and for military aggrandizement.

In May 2023, Hinton announced his resignation from Google so that he could "freely speak out about the risks of A.I." He has voiced concerns about deliberate misuse by malicious actors, technological unemployment, and existential risk from artificial general intelligence. He noted that establishing safety guidelines will require cooperation among those competing in the use of AI in order to avoid the worst outcomes. After receiving the Nobel Prize, he called for urgent research into AI safety to figure out how to control AI systems that are smarter than humans.[1]

He is the author of "Neural Network Architectures for Artificial Intelligence."

The threat was posed even more dramatically by Jesse Van Griensven, adjunct professor at the University of Waterloo in Canada and executive chairman of the quantum cybersecurity company EigenQ. In an interview with Nikkei Asia, he used a bank robbery analogy to describe how next-generation quantum computing will turbocharge the capabilities of AI: "With today's computers, somebody hacks your account and your money is gone. With quantum computers, the money from the whole bank is gone." Such machines could disable airports, power plants, telecom networks and military forces, reducing the United States "to the Stone Age" without firing a single bullet, he said.[2]

In an interview with British entrepreneur Steven Bartlett on his "Diary of a CEO" series, Hinton warned that super-intelligent AI, able to surpass humans, could be "ten to twenty years away."[3] Asked what life would be like for humans afterwards, he replied: "Ask a chicken."

BACKGROUND

Hinton described the approach he pursued in AI research, which was to "…simulate a network of brain cells on a computer and try and figure out how you would learn strengths of connections between brain cells so that it learned to do complicated things like recognize objects and images or recognize speech or even do reasoning. I pushed that approach for, like, 50 years because so few people believed in it. There weren't many good universities that had groups that did that. So, if you did that, the best young students who believed in that came and worked with you. So, I was very fortunate in getting a whole lot of really good students."

However, Hinton acknowledged that he was slow to recognize some of AI's risks: "Some of the risks were always very obvious. Like people would use AI to make autonomous lethal weapons. That is, things that go around deciding by themselves who to kill. Other risks, like the idea that they will one day get smarter than us and maybe (we) would become irrelevant … I was slow to recognize … other people recognized it 20 years ago. I only recognized it a few years ago that … was a real risk … Remember, neural networks 20, 30 years ago were very primitive in what they could do. They were nowhere near as good as humans."

Hinton said the threat became clearer with ChatGPT: “It changed for the general population when ChatGPT came out. It changed for me when I realized that the kinds of digital intelligences we’re making have something that makes them far superior to the kind of biological intelligence we have.”

Hinton laid out two areas of risk: “There’s risks that come from people misusing AI … And then there’s risks that come from AI getting super smart and saying it doesn’t need us. And I talk mainly about that second risk because lots of people say: ‘Is that a real risk?’ And yes, it is. Now, we don’t know how much of a risk it is. We’ve never been in that situation before. We’ve never had to deal with things smarter than us … And anybody who tells you they know … what is going to happen and how to deal with it, they’re talking nonsense.”

The development of new AI-driven weapons is so compelling for governments that they are overlooking the broader threat to humanity: "With AI, it's good for many, many things. It's going to be magnificent in healthcare and education and more or less any industry that needs to use its data is going to be able to use it better with … AI. So, we're not going to stop the development … Also, we are not going to stop it because it's good for battle robots. And none of the countries that sell weapons are going to want to stop it … European regulations have a clause in them that say none of these regulations apply to military uses of AI. So, governments are willing to regulate companies and people, but they are not willing to regulate themselves."

Finally, companies investing in AI to cut costs are so driven by the lure of increased profitability, masked as productivity gains, that they have no incentive to regulate against dangerous development: "We've got capitalism, which is done very nicely by us, and it's produced lots of goods … and services for us. But these big companies, they're legally required to try and maximize profits, and that's not what you want from the people developing this stuff."

THREATS TO HUMANITY

Hinton described several areas of threats to humanity:

1) Increased cyber-attacks: "Let's talk about all the risks from bad human actors using AI … There's cyber-attacks. So, between 2023 and 2024, they increased by about a factor of 12, 1,200% … AI is very patient, so they can go through 100 million lines of code looking for known ways of attacking them. That's easy to do, but they can get more creative … Some people who know a lot believe that maybe by 2030, they'll be creating new kinds of cyber-attacks which no person ever thought of. So that's very worrisome because they can."

For example, the San Francisco-based company Anthropic disclosed that its Claude Code tool was used by a single threat actor with minimal technical expertise to launch attacks affecting 17 organizations. Anthropic's Threat Intelligence Report for August 2025 disclosed: "This threat actor leveraged Claude's code execution environment to automate reconnaissance, credential harvesting, and network penetration at scale, potentially affecting at least 17 distinct organizations in just the last month across government, healthcare, emergency services, and religious institutions that were used to create and sell 'no code' ransomware and scale data extortion campaigns."[4]

2) AI threatens jobs: Hinton believes that companies are already shedding jobs and replacing humans with AI in areas such as call centers. This threat is supported by a recent Massachusetts Institute of Technology study, which found that artificial intelligence can already replace 11.7% of the U.S. labor market, or as much as $1.2 trillion in wages across finance, health care and professional services.[5]

3) Advances in medical research have a downside: AI can also be used to develop COVID-type viruses, and perhaps more lethal ones:

“One guy who knows a little bit of molecular biology, knows a lot about AI and just wants to destroy the world. You can now create new viruses relatively cheaply using AI, and you don’t have to be a very skilled molecular biologist to do it. And that’s very scary. So, you could have a small cult, for example. A small cult might be able to raise a few million dollars. For a few million dollars, they might be able to design a whole bunch of viruses.”

Governments considering such viruses as weapons would have to worry about retaliation: "They'd be worried about retaliation. They'd be worried about other governments doing the same to them. Hopefully, that would help keep it under control. They might also be worried about the virus spreading to their country…"

4) AI can be used to corrupt elections: Hinton says this risk was heightened in 2025 by the acquisition of confidential personal data by Elon Musk and the Trump administration: "Anybody who wanted to use AI for corrupting elections would try and get as much data as they could about everybody in the electorate. With that in mind, it's a bit worrying what Musk is doing at present in the States, going in and insisting on getting access to all these things that were very carefully siloed. The claim is it's to make things more efficient, but it's exactly what you would want if you intended to corrupt the next election. You get all this data on people, … how much they make, … everything about them. Once you know that, it's very easy to manipulate them because you can make an AI that you can send messages that they'll find very convincing, telling them not to vote, for example. So, … I wouldn't be surprised if part of the motivation of getting all this data from American government sources is to corrupt elections."

PROFITS BLINDING COMPANIES & GOVERNMENTS TO AI’S THREAT

Unfortunately, a common theme in many of the outcomes Hinton describes is that AI applications increase profitability for companies even though long-term AI developments can harm people:

“So, once you get to a situation where … to make more profit, the company starts doing things that are very bad for society, like showing you things that are more and more extreme. That’s what regulations are for. So, you need regulations. With capitalism now, companies will always say, regulations get in the way, make us less efficient. And that’s true. The whole point of regulations is to stop them doing things to make profit that hurt society. And we need strong regulation.”

For example, Anthropic demonstrated a new technique to prevent users from eliciting harmful content from its models. Anthropic has been trying to build a safer AI product, but such technology increases costs, as a Financial Times report noted: "However, adding these protections also incurs extra costs for companies already paying huge sums for computing power required to train and run models. Anthropic said the classifier would amount to a nearly 24 per cent increase in 'inference overhead', the costs of running the models."[6]

Hinton explained that AI systems already surpass the human mind in many areas, so that super-intelligence, in which humans will become irrelevant, is perhaps only 10 to 20 years away: "AI is already better than us at a lot of things … like chess, for example, AI is so much better than us that people will never beat those things again. Maybe the occasional win, but basically they'll never be comparable again … Something like GPT4 knows thousands of times more than you do. There's a few areas in which your knowledge is better than its, and in almost all areas, it just knows more than you do. It might be 50 years away. That's still a possibility. It might be that somehow training on human data limits you to not being much smarter than humans. My guess is between 10 and 20."

The fundamental issue is that machine intelligence may be able to improve itself in ways human intelligence cannot: "Remember that AI, when it's training, is using code, and if it can modify its own code, then it gets quite scary. It can change itself in a way we can't change ourselves. We can't change our innate endowment … There's nothing about itself that it couldn't change."

As to whether governments can be pushed to regulate AI companies, Hinton is not hopeful: "It's going to be decided by whether the lobbyists … can be kept under control. I don't think there's much people can do… except for try and pressure their governments to force the big companies to work on AI safety. That, they can do."

FOOTNOTES

[1] https://en.wikipedia.org/wiki/Geoffrey_Hinton

[2] https://asia.nikkei.com/spotlight/cybersecurity/china-s-quantum-leap-will-eclipse-us-aircraft-carriers-analysts-say

[3] https://www.youtube.com/watch?v=giT0ytynSqg

[4] https://www.ajot.com/insights/full/ai-anthropic-says-its-ai-claude-code-was-used-to-attack-17-organizations-in-one-month

[5] https://www.cnbc.com/2025/11/26/mit-study-finds-ai-can-already-replace-11point7percent-of-us-workforce.html

[6] https://www.ft.com/content/cf11ebd8-aa0b-4ed4-945b-a5d4401d186e